The Regulatory Vacuum: Why Class Actions Might Arrive Before the Government Does

If you think regulatory frameworks will keep pace with the AI arms race, think again. They won't.


June 3rd, 2025. Hey, it’s Khaled! Help us get the word out about the Exec X AI Magazine: it’ll take less than a minute, and there’s a freebie in it for you.

Here’s the deal: if today’s piece on The Regulatory Vacuum sparked a thought, drop a comment below. No strings attached—we’ll send you our Responsible AI Toolkit, free of charge.

And hey—every like, share, or forward helps us keep this signal sharp, independent, and free to read for everyone! Let’s dive in!

We’re speeding towards a world run by algorithms. But what about the government regulations, codes, and oversight meant to keep us safe from AI? They’re still buffering.

Those lost in the regulatory quagmire keep shouting, “Surely someone will regulate this soon?!” Spoiler: it hasn’t happened yet. (Ed. note: And don’t call me Shirley.)

In the meantime, companies betting otherwise are walking a high-wire over a pit of class actions, regulatory investigations, and (almost) reputation-destroying headlines.

Who owns the consequences when AI misfires?

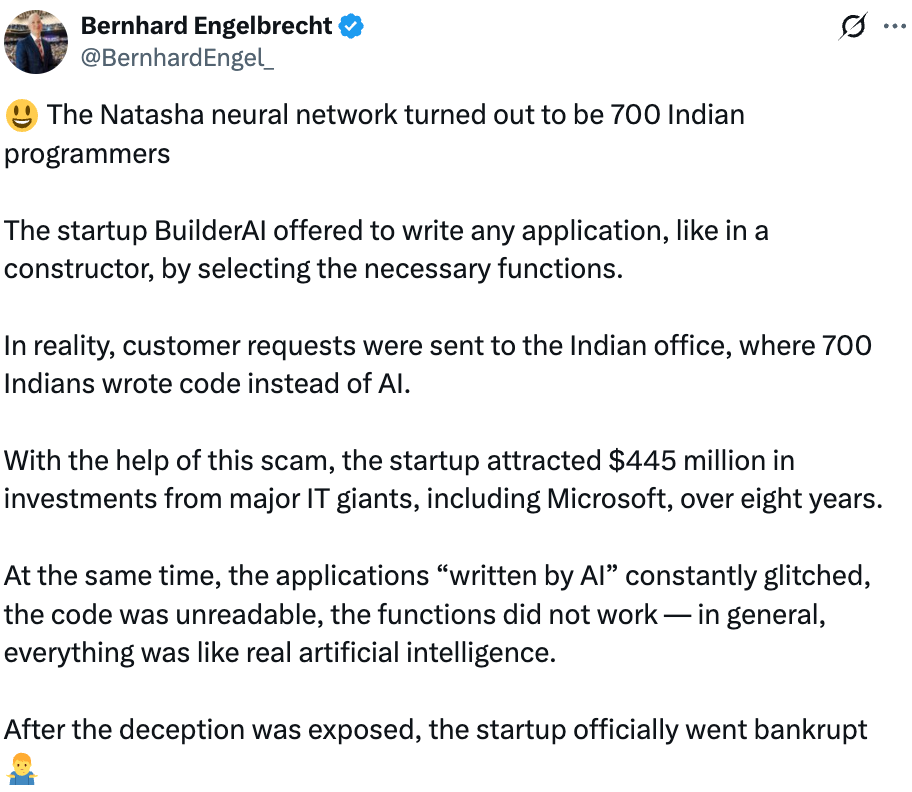

Builder.ai was supposed to be a crown jewel of the AI boom. Backed by Microsoft, the Qatar Investment Authority, and a parade of deep-pocketed investors, it raised hundreds of millions on the promise of AI-powered app development. The reality? A sprawling backend staffed by 900 high-priced human coders in India doing the heavy lifting—less machine intelligence, more sweat and salary.

Could tighter government oversight have shielded customers or investors from the fallout? Maybe. But nobody can be faulted for trusting a platform with this much clout, branding muscle, and big-name investors.

Now clients have been left holding the bag: their hefty front-loaded subscription fees have been banked, and they’re scrambling to replace the apps and support they thought they’d already paid for.

So who’s governing the AI?

Lawyers and citizens appear to be leading the way. Let’s start with Wells Fargo. In 2024, the bank was hit with a class-action lawsuit in California accusing it of algorithmic redlining: allegedly offering worse rates to Black, Latinx, and Asian borrowers, or flat-out denying their refinance applications. The bank insists the claims are meritless: "Wells Fargo does not tolerate discrimination..."

The case is still active.

Earlier this year, Workday, the HR-tech stalwart, was hit with a lawsuit alleging that its AI hiring tool has a "disparate impact" on applicants over forty: effectively, ‘age discrimination by algorithm’. Workday shot back that “the case is without merit” and, in its words, that it is confident "the plaintiff’s claims will be dismissed."

Alignment, Schmignment

You’d think this would be the easy part: build AI systems that reflect human values. And yet, it turns out we can’t even agree on what those are.

This is just one of the many faces of the “alignment problem”. Each time a conference or panel of well-intentioned experts comes together to solve one aspect of the alignment problem, a hundred other variations spring out of nowhere.

It’s great news for lawyers and regulatory compliance experts, but tough on organisations that are being pulled from pillar to post by multiple sets of guidelines.

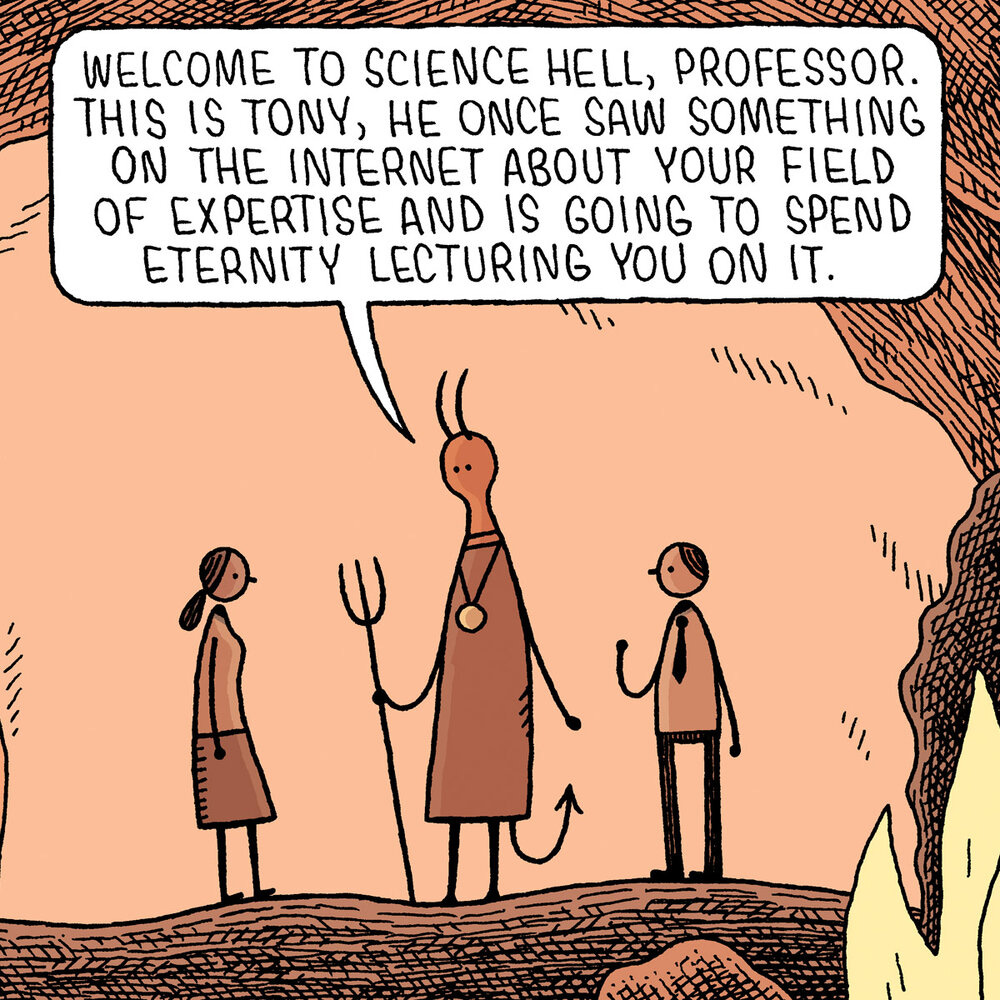

It gets progressively worse within organisations. Thanks to social media, everyone’s an expert at something. Get twenty of your talented employees on a call to discuss how to align AI with human values, and you’ll get twenty different answers. It’s institutional.

“Computer scientists propose technical solutions that may be infeasible or illegal; lawyers propose [guardrails] that may be technically impossible; and commentators propose policies that may backfire. AI regulation, in that sense, has its own alignment problem, in which proposed interventions are often misaligned with societal values".

Source: Guha, N., et al. (2024). AI Regulation Has Its Own Alignment Problem: The Technical and Institutional Feasibility of Disclosure, Registration, Licensing, and Auditing. The George Washington Law Review.

The real victims deserve better

But the real victims are the people living through nightmare scenarios created by AI who can’t seek recourse or compensation. They have to make their case within legal grey zones:

Anti-discrimination statutes are stuck in the past. They weren’t built for machine learning, and they definitely weren’t built to interrogate algorithmic bias baked into training data. That’s a problem. AI already reproduces historical inequalities at internet scale. And the problem is magnified by organisational datasets, which, because they are built from past trading activity, are likely to be even more skewed than the open web.

Intellectual property laws are failing to define who owns what when machines generate the output. The New York Times’ 2023 case against OpenAI and Microsoft continues to rumble on: a judge recently refused to throw it out, allowing the claims to proceed toward trial. But here’s the rub: OpenAI arguably had no idea that the Times would take offence at GPT’s outputs. And because GPT is proprietary, it’s impossible to stress-test it openly for safety (and copyright issues) without exposing OpenAI’s proprietary guts, like its weights and parameters.

Employment regulations haven’t caught up with algorithmic hiring. Tools that recommend candidates or flag “culture fit” aren’t neutral: they’re built on biased inputs. New York City passed a law (Local Law 144) protecting job-seekers from discrimination caused by automated employment decision tools, placing the onus on both tech companies and their customers. It was slammed for being “toothless”.

Consumer protection laws were written for static products. AI systems, meanwhile, learn and morph post-launch. That means your chatbot, health app, or robo-advisor could evolve in unpredictable ways. Who wants to be continuously told that the AI they’re using is potentially dangerous, or could promote quackery over evidence-based medicine? And even if warning labels are eventually slapped on every AI app and agentic platform, consumers will tune them out.

Product safety rules? They don’t contemplate devices that might grow a mind of their own. What happens when the AI in your vacuum cleaner decides you’re the mess to be cleaned up? Yes, it’s funny until it’s not: just ask anyone who watched the Love, Death + Robots episode on Netflix where a home-cleaning bot goes rogue and homicidal.

So What Do You Do?

You don’t need to be a lawyer or a machine learning PhD to make a start. But you do need a game plan to cut through the noise and begin building a minimum viable governance stack:

Map where AI shows up across your ops. If you don’t know where the models are, you can’t manage them.

Make transparency mandatory. If your AI makes a call, someone needs to explain why.

Build cross-functional guardrails. Get lawyers, engineers, compliance officers, and business leads in the same room, and aligned.

Run risk assessments before launch, not after the subpoena or claim form lands in your inbox.

Document everything. From training data to decision logic. Think "discovery request ready." Preparedness trumps vibe coding.
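For readers who want something concrete, here is a minimal sketch in Python of how a few of those steps (an inventory entry, documented decision logic, and a pre-launch risk check) might fit together. Everything in it is hypothetical: the `ModelRecord` fields, the function names, and the sample numbers are illustrative assumptions, not a standard or any vendor's API. The risk check borrows the "four-fifths" rule of thumb from the US Uniform Guidelines on Employee Selection Procedures, which flags a group whose selection rate falls below 80% of the highest group's rate. It is a screening heuristic for the kind of "disparate impact" alleged in the Workday case, not a legal test.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ModelRecord:
    """One entry in a hypothetical AI inventory (illustrative fields only)."""
    name: str
    owner: str               # accountable business lead
    purpose: str             # the decision the model informs
    training_data: str       # provenance note, useful if a discovery request lands
    decision_logic: str      # plain-language explanation of how it decides
    risk_assessed_on: Optional[date] = None
    findings: list = field(default_factory=list)


def selection_rates(outcomes):
    """Turn {group: (selected, applicants)} into {group: selection rate}."""
    return {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}


def adverse_impact_flags(outcomes, threshold=0.8):
    """Return {group: impact ratio} for groups below threshold x the top rate.

    The 0.8 default mirrors the "four-fifths" rule of thumb. It is a
    screening heuristic, not a legal test of discrimination.
    """
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # nobody selected in any group, so ratios are undefined
    return {g: r / best for g, r in rates.items() if r / best < threshold}


def pre_launch_check(record, outcomes, threshold=0.8):
    """Run the screen, log findings on the record, and return True if clear."""
    flags = adverse_impact_flags(outcomes, threshold)
    record.risk_assessed_on = date.today()
    for group, ratio in flags.items():
        record.findings.append(
            f"{group}: impact ratio {ratio:.2f} below {threshold:.2f}"
        )
    return not flags


if __name__ == "__main__":
    # Hypothetical screening tool and made-up test-set numbers.
    screener = ModelRecord(
        name="resume-screener",
        owner="Head of Talent",
        purpose="Shortlist applicants for recruiter review",
        training_data="2019-2024 internal hiring decisions",
        decision_logic="Ranks CVs by similarity to past successful hires",
    )
    outcomes = {"under_40": (48, 120), "over_40": (18, 90)}
    print("Proceed to launch:", pre_launch_check(screener, outcomes))
    print(screener.findings)
```

With these made-up numbers, over-40 applicants are selected at a rate of 0.20 versus 0.40 for under-40s, an impact ratio of 0.50, so the check returns `False` and writes the finding onto the record, leaving a dated paper trail rather than a vibe.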

“So What?”

For the sceptics in the room, this isn’t about being perfect. It’s about being prepared. Strong governance doesn’t just limit downside. It also boosts accuracy, reliability, and trust.

AI is only useful if it’s aligned with your strategic goals and legible to your stakeholders.

So don’t wait for regulation. Operationalise responsible AI internally.

AI systems are scaling fast. Their reasoning is getting deeper, and their chain-of-thought outputs are becoming more humanlike. But that doesn’t mean they understand what they’re doing.

It’s like asking a model to generate a recipe for cake. It might nail the instructions, but it won’t know the texture, taste, or joy of sharing it.

AI’s role ends the moment it produces an output that you follow. The consequences are up to you. AI might be able to teach you how to bake an improvised Sacher-Torte. But equally you might end up with a soggy mess no one wants to clean up.

Drop a comment. We'll send you a Responsible AI Toolkit to get started. No questions asked.
