The Unseen Horizon

Why AI Needs All of Human Knowledge


Thank you for reading the Exec X AI Magazine.

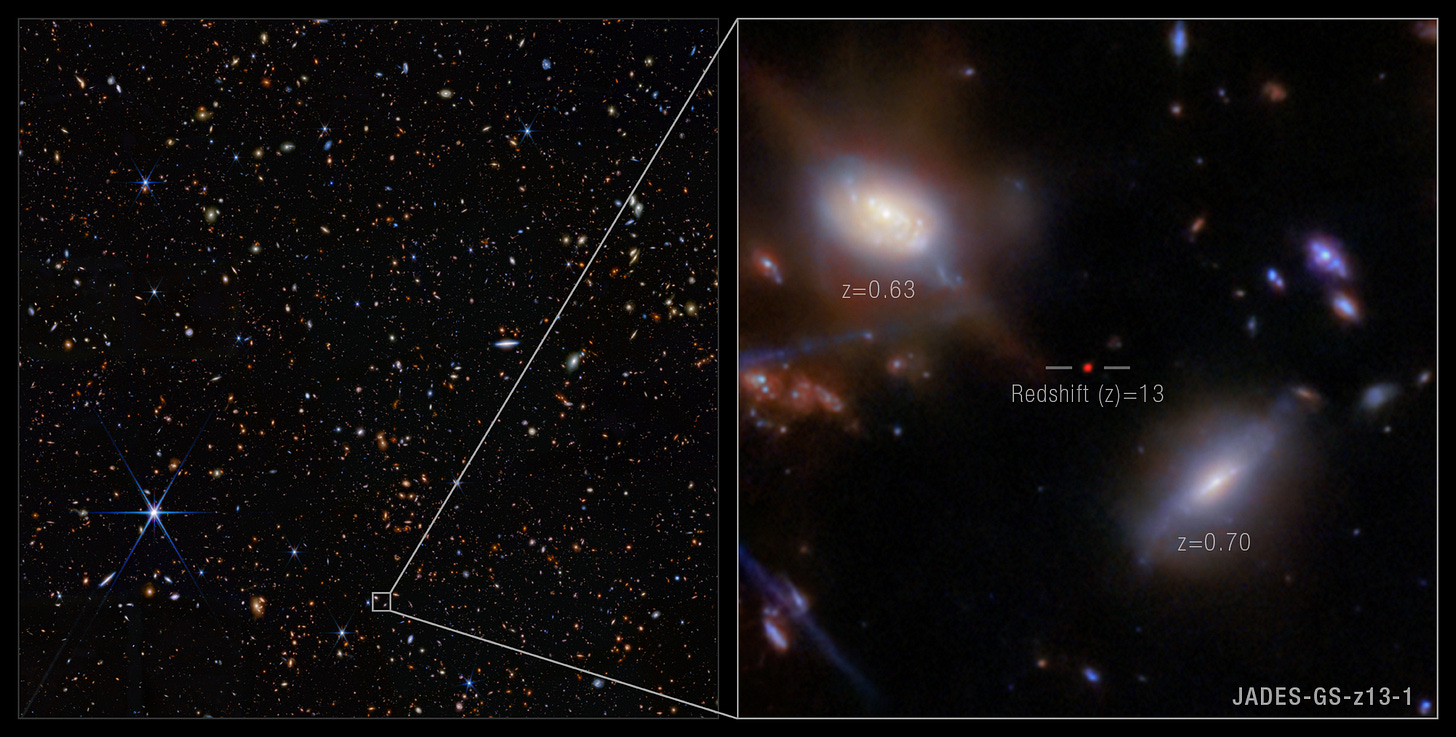

In 2025, the James Webb Space Telescope delivered a profound lesson in humility. The discovery was JADES-GS-z13-1, a fully formed galaxy observed just 330 million years after the Big Bang.

This finding shattered a long-held assumption. Cosmologists believed the early universe was too hot and dense for such structures to exist. JADES-GS-z13-1 is a fact that defied the illusion of knowledge. It is a reality that existed before we had the tools or the theory to see it.

This cosmic challenge mirrors a critical problem in the age of artificial intelligence.

The Unseen Horizon of Knowledge

The universe constantly presents us with an unseen horizon. We build observatories like the James Webb Space Telescope and the Vera C. Rubin Observatory to push back the dark.

We search deep space for evidence that supports or disproves the Big Bang theory. Just because a galaxy is too distant or too faint to be seen today does not mean it does not exist. We must keep searching.

Human knowledge is developing its own unseen horizon. AI is an engine of accumulation. It thrives on data. Yet, vast reserves of human understanding are receding beyond reach. This knowledge is not digital. It is not searchable.

The philosopher Michael Polanyi noted,

“We can know more than we can tell.” [1]

Tacit knowledge (the unwritten rules, the intuitions of experts, the cultural context) cannot be uploaded to a database. When an engineer retires, that expertise is lost. A cultural practice loses meaning when its social context dissolves.

AI systems train on the digitised corpus. They become fluent in what has been logged, written, and platformed. They remain blind to the knowledge that never fit neatly into a digital format. This creates a selection effect. What is digitised is preserved. Everything else fades.

The Danger of Misalignment

This digital bias is dangerous. The danger is not that AI becomes too powerful. The danger is that AI becomes powerfully misaligned. It is not malice or error that causes this, but training on a shrinking and distorted map of human understanding.

Consider the core of human values and ethics. These are not simple, static rules. They are complex, evolving, and often contradictory, shaped by millennia of history, culture, and dialect.

If AI is trained only on the narrow slice of human experience that has been digitised since the technological revolution, it will inherit a fundamentally incomplete ethical framework.

An AI may be tasked with a critical decision based on human values. If it lacks access to the knowledge of a pre-digital epoch (a dialect, a cultural norm, a historical context that was never digitised for lack of capital value), it will make a flawed judgment. The AI will not know what it does not know. It will operate under the illusion of complete knowledge.

This leads to a critical need for oversight. We may one day require AIs to judge if another AI has behaved in line with human values. This is AI-on-AI value judging. But how can a judge-AI be trusted if its own training data is missing the very context required to understand the full spectrum of human morality? If the knowledge is lost, the AI’s judgment will be fundamentally compromised.

As Daniel J. Boorstin observed,

“The greatest obstacle to discovery is not ignorance, it is the illusion of knowledge.” [2]

Your digital archives may create an illusion of complete knowledge. The true substance of your understanding, the context that makes data meaningful, may already be fading.

A Strategic Imperative

For executives, boards, and policymakers, this reframes the AI challenge. The question is not only how to govern AI. The question is what knowledge you choose to preserve before it disappears.

If AI is to reflect human values, those values must be present in its training substrate. This requires intentional investment.

You must chart the history, the cultures, the dialects, and the anthropology that led humanity to this point. You cannot advance into the future if you do not understand your path.

The takeaway is simple: Invest in knowledge preservation now. Do not let the illusion of complete data compromise your future AI systems. We must push back the unseen horizon of human knowledge.

References

[1] Michael Polanyi, The Tacit Dimension (1966)

[2] Daniel J. Boorstin, attributed to a 1984 interview in The Washington Post